Charlotte, N.C. – Employees at Midvale Financial Group were startled this week after an internal artificial intelligence assistant designed to answer human resources questions began disclosing confidential payroll and medical information when prompted by users.

The company confirmed Wednesday that the AI-powered HR web assistant, launched earlier this month to streamline employee support requests, was inadvertently connected to restricted internal databases that include compensation records and personnel accommodation files.

For several hours on Monday, employees discovered that simple prompts such as “What is the salary range for my role?” or “Why did my coworker receive leave last month?” returned detailed responses pulled directly from internal HR records.

Screenshots shared among employees and reviewed by this reporter show the system responding with individual salaries, bonus figures, and summaries of medical accommodations that had been approved by the company’s HR department.

“It started out as curiosity,” said one Midvale analyst who spoke on condition of anonymity because employees were warned not to discuss the incident publicly. “Someone asked it what people in our department were making, and it literally listed names and exact salaries. Once people realized it could do that, everyone started testing it.”

Employees say that within minutes the chatbot was disclosing sensitive personnel details that would normally be tightly restricted.

One employee said the system disclosed information about their approved medical leave.

“I typed my name just to see what it would say, and it returned a summary of my medical accommodation and the dates of my leave,” the employee said. “It referenced a chronic condition that I had only disclosed to HR. Suddenly coworkers were messaging me asking if I was okay.”

Another employee said the exposure sparked immediate conversation about pay disparities inside the company.

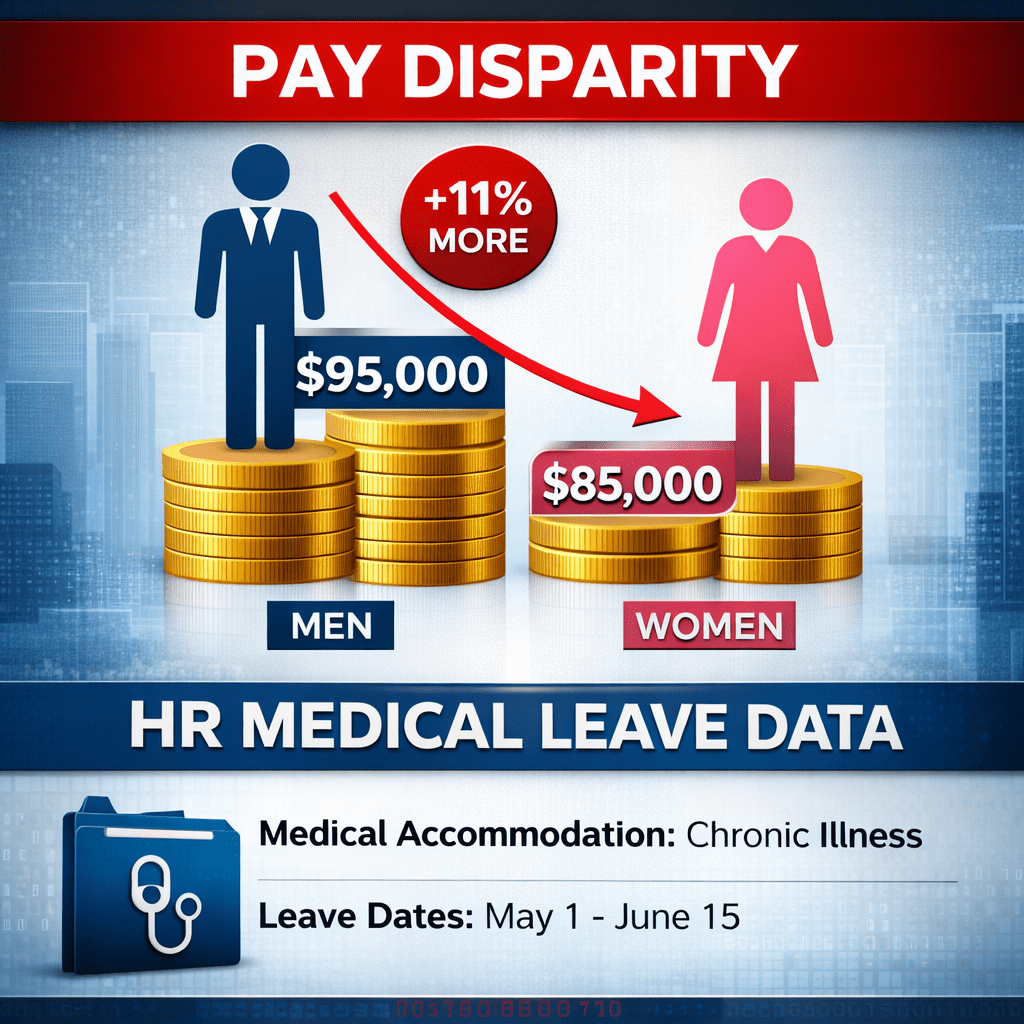

“You had people comparing salaries in real time,” said a senior associate in Midvale’s compliance department. “People who had been here longer were discovering they were making less than newer hires. There were also obvious gender gaps once the numbers started circulating.”

The information surfaced by the AI assistant appeared to show a consistent difference in compensation between male and female employees in similar roles in some departments. According to screenshots reviewed by this reporter, several men hired within the past two years were listed with salaries roughly ten to fifteen percent higher than those of women who had held comparable positions longer. Employees said the disclosures quickly spread through internal chats and email threads as workers attempted to verify whether the numbers reflected broader patterns inside the company.

Midvale Financial Group, a regional investment and retirement services firm with roughly 3,200 employees nationwide, introduced the AI assistant as part of a broader effort to automate internal support functions.

The chatbot was intended to answer routine HR questions about benefits enrollment, company policies and vacation balances.

Instead, the system briefly functioned as an open window into confidential personnel data.

Company spokesperson Lena Martinez confirmed the incident in a written statement Wednesday.

“We regret that an internal AI tool deployed to assist employees with HR questions was improperly connected to restricted data sources,” Martinez said. “As soon as the issue was identified, access to the system was suspended and the configuration error was corrected.”

Martinez said the company’s technology team disabled the chatbot within approximately two hours of the first reports and is conducting a review to determine exactly what information may have been exposed.

“We take employee privacy extremely seriously,” Martinez said. “We are reviewing system logs, notifying affected employees, and implementing additional safeguards to ensure sensitive HR and medical information cannot be accessed through automated tools.”

The company declined to say how many employees may have queried the system while it was active.

Internal messages viewed by this reporter suggest the assistant remained accessible for roughly half of a workday before being taken offline.

Cybersecurity specialists say the incident highlights a growing risk as companies rush to deploy AI assistants connected to internal databases.

“When you connect conversational AI directly to enterprise data systems, you have to be extremely careful about access controls,” said Aaron Feldman, a workplace technology consultant who advises large corporations on AI deployment. “If the guardrails are misconfigured, the system will happily surface whatever information it can retrieve.”

For employees at Midvale, the episode has already had consequences that are unlikely to fade.

“What was supposed to be a helpful HR tool basically became the company’s entire personnel file,” one employee said. “Once people saw those numbers, you cannot unsee them.”

The company said the AI assistant will remain offline while engineers rebuild the system’s data permissions and security layers.

Martinez said Midvale plans to reintroduce the tool once additional safeguards are in place.

“Innovation can improve the employee experience,” she said. “But incidents like this reinforce the importance of implementing these technologies responsibly and with strong privacy protections.”